Dr. Rishabh Das

Dr. Rishabh Das is an Assistant Professor at the Scripps College of Communication, Ohio University. Dr. Das has over a decade of hands-on experience in operating, troubleshooting, and supervising control systems in the oil and gas industry. Dr. Das's research portfolio includes virtualization of Industrial Control Systems (ICS), threat modeling, penetration testing in ICS, active network monitoring, and the application of Machine Learning (ML) in cybersecurity.

The use of GenAI is accelerating workflows in software programming. Soon, automation companies will likely integrate GenAI assistants into the tools used to program industrial devices. The result will be faster workflows and, probably, a shorter learning curve for automation engineers. Although such integration holds a lot of promise and efficiency, like every new technology it brings security concerns that we need to navigate.

Ideally, the GenAI capabilities integrated into modern automation frameworks will be well thought out and secure. But what if a user turns to a publicly available GenAI model instead? Any data, message, or question posted to a public LLM may be retained or shared, including confidential code, architecture diagrams, and information related to critical infrastructure. The result is data leakage.

The last thing we need is an automation engineer pasting an entire ladder logic workflow into a public GenAI tool and asking for recommendations. Like most theorized cybersecurity scenarios, it will eventually happen, and we need to prepare for the worst.

The question is: how do we proactively detect such leakage? Passive network monitoring tools can continuously capture traffic and flag communication with GenAI applications. If the security team captures the relevant traffic while the leak is happening, it may be able to perform deep packet inspection and investigate the impact of the leak.

As GenAI-based capabilities become integrated into everyday workflows, organizations should consider capturing network traffic at strategic aggregation points and tracking traffic flows from all critical departments, especially those in direct contact with critical infrastructure.

This article looks at several network artifacts that can help you detect GenAI usage and take proactive action.

Simple yet effective: DNS Queries

Organizations should consider monitoring DNS queries. Identifying GenAI-related queries sent to the local DNS server lets the organization pinpoint users of public GenAI services. If necessary, access to GenAI can be blocked from sensitive network segments or across the entire network. The organization can also identify the users trying to reach GenAI from sensitive segments and educate them about acceptable and secure use of AI tools. Such training helps users understand the pros and cons of GenAI and the security risks involved.

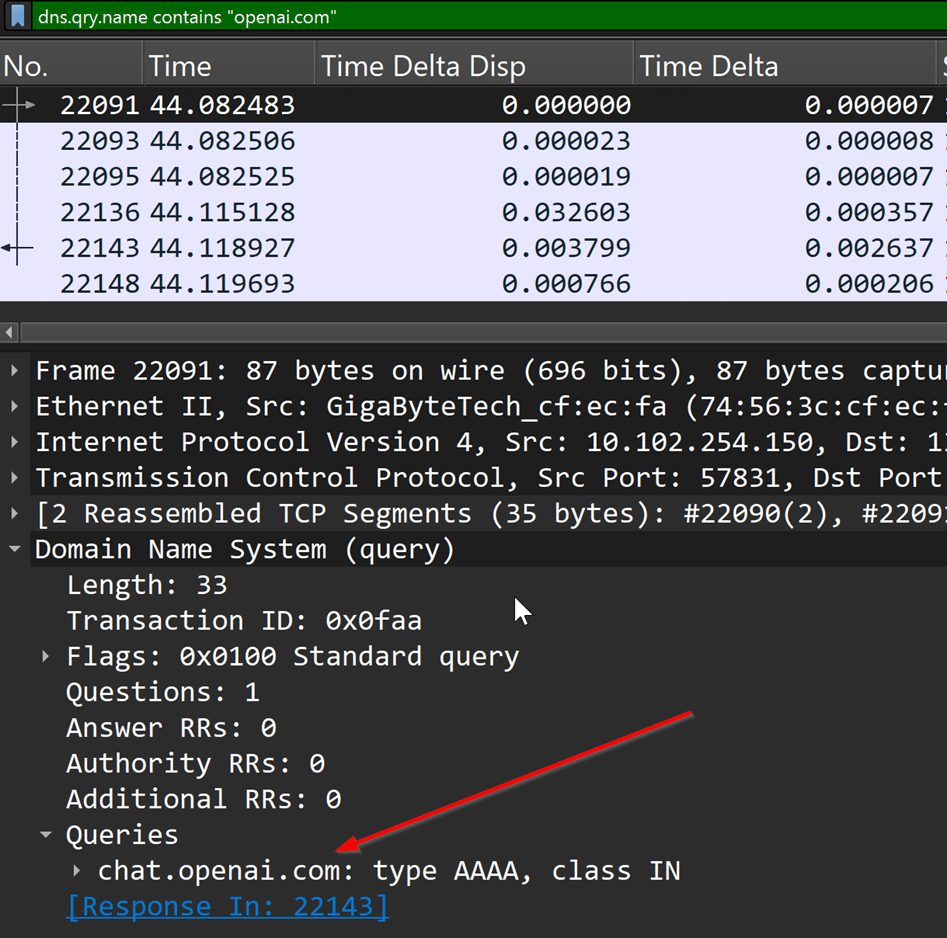

When an end user tries to access a public LLM like ChatGPT, we can potentially detect it by looking for DNS A record queries for “openai.com”. For passive analysis, a security analyst can apply a Wireshark display filter to a capture and check whether any users tried to connect to ChatGPT.

A Wireshark filter to identify DNS queries to “openai.com” is:

dns.qry.name contains "openai.com"
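To show what that filter is matching on, the Python sketch below decodes the query name from a raw DNS message (the UDP payload) and checks it against a domain. It assumes a standard query whose question name starts right after the fixed 12-byte DNS header. The function names and the `build_query` test helper are hypothetical. Unlike Wireshark's `contains`, which is a substring match, `matches_domain` compares whole labels, so a lookalike such as “notopenai.com” is not flagged.

```python
import struct


def parse_qname(message: bytes, offset: int = 12) -> str:
    """Decode the first question name in a DNS message.

    A DNS name is a series of length-prefixed labels ending in a zero byte.
    The question section starts right after the fixed 12-byte header.
    Compression pointers are not expected in a query's question, so this
    sketch rejects them instead of following them.
    """
    labels = []
    while True:
        if offset >= len(message):
            raise ValueError("truncated DNS name")
        length = message[offset]
        if length == 0:
            return ".".join(labels)
        if length & 0xC0:
            raise ValueError("compression pointer in question name")
        start, offset = offset + 1, offset + 1 + length
        if offset > len(message):
            raise ValueError("truncated DNS label")
        labels.append(message[start:offset].decode("ascii", errors="replace"))


def query_name(udp_payload: bytes) -> str:
    """Return the lowercase name from a DNS query carried in a UDP payload."""
    if len(udp_payload) < 12:
        raise ValueError("shorter than a DNS header")
    (qdcount,) = struct.unpack_from("!H", udp_payload, 4)
    if qdcount == 0:
        raise ValueError("no question section")
    return parse_qname(udp_payload).lower()


def matches_domain(name: str, domain: str) -> bool:
    """True if name is the domain itself or one of its subdomains."""
    name = name.rstrip(".").lower()
    return name == domain or name.endswith("." + domain)


def build_query(name: str) -> bytes:
    """Build a synthetic standard A-record query (test helper only)."""
    header = struct.pack("!HHHHHH", 0x1234, 0x0100, 1, 0, 0, 0)
    qname = b"".join(bytes([len(p)]) + p.encode() for p in name.split("."))
    return header + qname + b"\x00" + struct.pack("!HH", 1, 1)  # QTYPE A, QCLASS IN


query = build_query("chat.openai.com")
print(query_name(query), matches_domain(query_name(query), "openai.com"))
# chat.openai.com True
```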

A comprehensive list of known domains associated with GenAI usage is maintained in this GitHub repository (https://github.com/laylavish/uBlockOrigin-HUGE-AI-Blocklist/blob/main/noai_hosts.txt). A security team can add these domains to its monitoring tool and generate alerts when an end user tries to access GenAI-based tools.
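A monitoring tool has to turn such a list into something it can match queries against. The sketch below assumes the list uses hosts-file syntax (a sink address followed by one or more host names, with `#` comments) and also accepts bare domain names; check the actual file format before relying on it. Each queried name is checked together with all of its parent domains, so a subdomain of a listed domain also matches. The names `load_watchlist` and `is_watched`, and the sample entries, are illustrative only.

```python
def load_watchlist(lines):
    """Build a set of domains from hosts-file style lines.

    Assumed formats: "0.0.0.0 host1 [host2 ...]" or a bare "host" per line.
    Text after '#' is treated as a comment; lines starting with '!' are
    skipped as adblock-style comments.
    """
    domains = set()
    for raw in lines:
        line = raw.split("#", 1)[0].strip()
        if not line or line.startswith("!"):
            continue
        fields = line.split()
        # With two or more fields, the first is the sink address (e.g. 0.0.0.0).
        hosts = fields[1:] if len(fields) > 1 else fields
        domains.update(h.lower().rstrip(".") for h in hosts)
    return domains


def is_watched(name, watchlist):
    """True if name or any parent domain of it appears in the watchlist."""
    labels = name.lower().rstrip(".").split(".")
    # "a.b.example.com" is checked as itself, "b.example.com", "example.com", "com"
    return any(".".join(labels[i:]) in watchlist for i in range(len(labels)))


sample = [
    "# illustrative entries, not taken from the real list",
    "0.0.0.0 openai.com",
    "0.0.0.0 chatgpt.com example-genai.test",
    "example-llm.test",
]
watchlist = load_watchlist(sample)
for queried in ("chat.openai.com", "www.example.org"):
    print(queried, is_watched(queried, watchlist))
# chat.openai.com True
# www.example.org False
```

In practice, the matching set would be fed with the query names extracted from live DNS traffic, and each hit turned into an alert that records the client address.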

TLS Handshakes [TLS SNI]

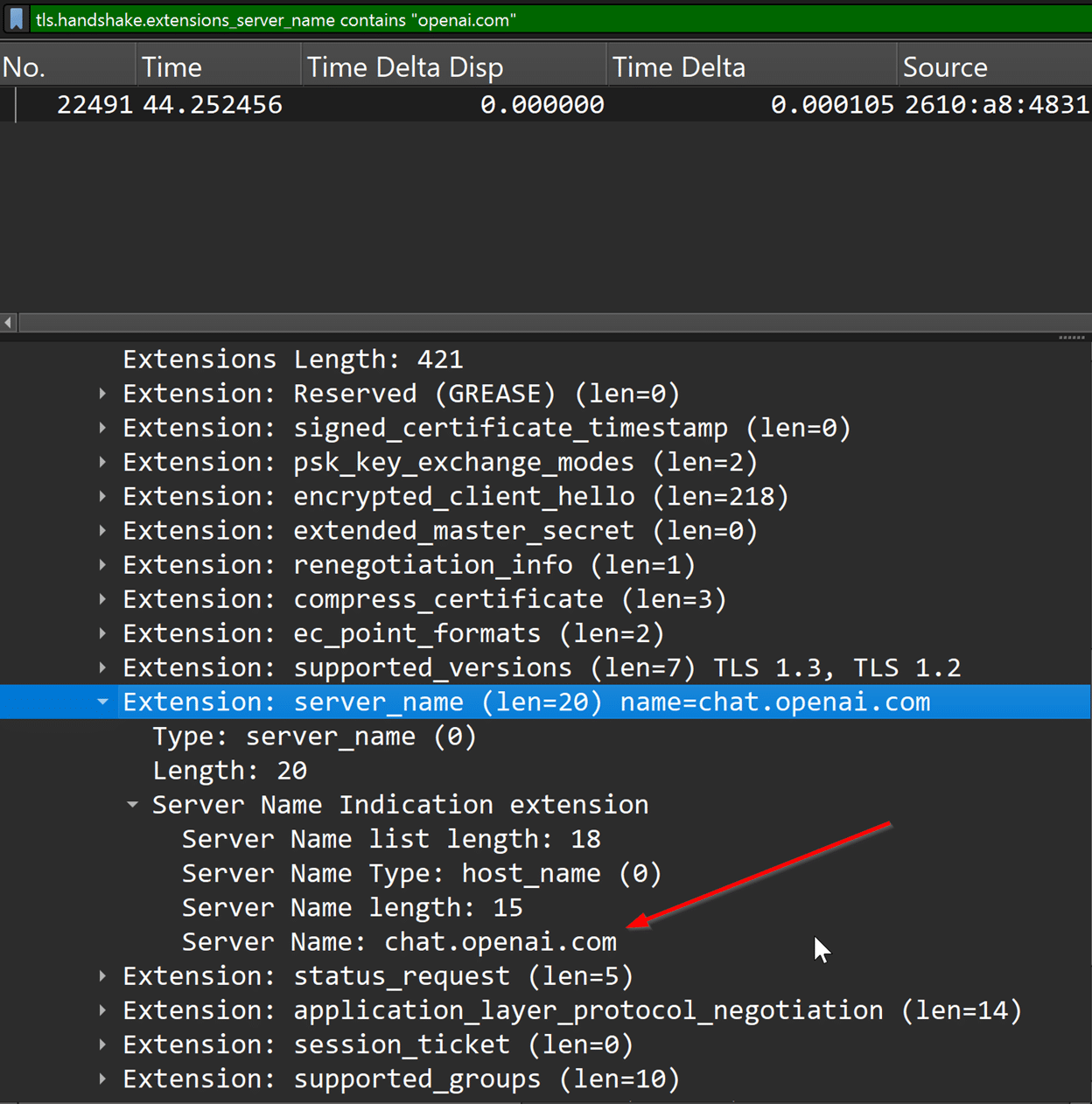

When multiple websites are hosted on one server and share a single IP address, and each website has its own SSL/TLS certificate, the server cannot tell which certificate to present when a client device tries to connect securely to one of the websites, because the handshake happens before any HTTP request reveals the site name. TLS Server Name Indication (SNI) solves this issue. SNI is an extension in the client's first handshake message (the ClientHello) that names the host the client is trying to reach, so the server can present the correct certificate. Because the ClientHello is sent unencrypted (unless Encrypted Client Hello is in use), the SNI is visible on the wire. If a network capture contains TLS handshakes, the security analyst can check the SNI values and associate them with GenAI usage.

For captured traffic, the following Wireshark filter checks for ChatGPT usage:

tls.handshake.extensions_server_name contains "openai.com"
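The Python sketch below shows where that SNI value sits inside a raw TLS ClientHello. It is a simplified parser that assumes the whole ClientHello fits in one TLS record and that the connection does not use Encrypted Client Hello (which hides the real host name). `extract_sni` and the synthetic `build_client_hello` test helper are hypothetical names, not part of any library.

```python
import struct
from typing import Optional


def extract_sni(record: bytes) -> Optional[str]:
    """Return the SNI host name from one TLS record holding a ClientHello.

    Returns None if the record is not a ClientHello, is truncated, or
    carries no host_name entry in a server_name extension.
    """
    try:
        # Record type 0x16 = handshake; handshake type 0x01 = ClientHello.
        if record[0] != 0x16 or record[5] != 0x01:
            return None
        pos = 9                          # 5-byte record header + 4-byte handshake header
        pos += 2 + 32                    # legacy_version + random
        pos += 1 + record[pos]           # session_id (1-byte length prefix)
        (cs_len,) = struct.unpack_from("!H", record, pos)
        pos += 2 + cs_len                # cipher_suites (2-byte length prefix)
        pos += 1 + record[pos]           # compression_methods (1-byte length prefix)
        (ext_total,) = struct.unpack_from("!H", record, pos)
        pos += 2
        end = pos + ext_total
        while pos + 4 <= end:
            ext_type, ext_len = struct.unpack_from("!HH", record, pos)
            pos += 4
            if ext_type == 0x0000:       # server_name extension
                # Skip the 2-byte list length, then read the first entry.
                name_type = record[pos + 2]
                (name_len,) = struct.unpack_from("!H", record, pos + 3)
                if name_type != 0:       # 0 = host_name
                    return None
                name = record[pos + 5:pos + 5 + name_len]
                if len(name) != name_len:
                    return None          # truncated record
                return name.decode("ascii")
            pos += ext_len
    except (IndexError, struct.error, UnicodeDecodeError):
        return None
    return None


def build_client_hello(host: str) -> bytes:
    """Build a minimal synthetic ClientHello record (test helper only)."""
    name = host.encode("ascii")
    entry = b"\x00" + struct.pack("!H", len(name)) + name
    sni = struct.pack("!HH", 0x0000, len(entry) + 2) + struct.pack("!H", len(entry)) + entry
    other = struct.pack("!HH", 0x000B, 2) + b"\x01\x00"   # ec_point_formats
    exts = other + sni
    body = (b"\x03\x03" + bytes(32) + b"\x00"              # version, random, empty session_id
            + struct.pack("!H", 2) + b"\x13\x01"           # one cipher suite
            + b"\x01\x00"                                   # one compression method (null)
            + struct.pack("!H", len(exts)) + exts)
    handshake = b"\x01" + len(body).to_bytes(3, "big") + body
    return b"\x16\x03\x01" + struct.pack("!H", len(handshake)) + handshake


print(extract_sni(build_client_hello("chatgpt.com")))  # chatgpt.com
```

A production sensor would also need to reassemble ClientHellos split across TCP segments; tools such as Wireshark already do this, which is one reason the display filter above is the simpler starting point.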

JA3S Fingerprinting

JA3S is a method for fingerprinting SSL/TLS servers. While its companion method, JA3, fingerprints clients from the ClientHello message, JA3S focuses specifically on the “ServerHello” message sent in response to a client's TLS handshake initiation. This message reveals the server's TLS choices: the TLS version, the selected cipher suite, and the list of extensions. JA3S concatenates these fields and compiles them into an MD5 hash, which serves as a consistent and identifiable signature for the server.

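A real-time network monitoring tool can parse TLS ServerHello packets and fingerprint servers supporting GenAI applications. For example, if a network trace captures the communication between an end user and the ChatGPT server, we can extract the ServerHello fields and compute the server's JA3S hash. Because a server's reply depends partly on what the client offers, the same server can produce different JA3S hashes for different client software, and unrelated servers running the same TLS stack can share a hash. The hash is therefore most reliable when combined with the client's JA3 hash, the SNI, or destination addresses to fingerprint GenAI-supporting servers.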
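As an illustration of the JA3S recipe, the Python sketch below parses a single TLS record holding a ServerHello and builds the JA3S string from the three fields in decimal (`version,cipher,extensions`, with extension types joined by hyphens) before hashing it with MD5. It assumes the ServerHello starts at the beginning of the record and ignores records split across TCP segments. For production use, an established implementation such as the reference scripts in Salesforce's JA3 repository is the safer choice. The function and helper names here are hypothetical.

```python
import hashlib
import struct
from typing import Optional, Tuple


def ja3s_from_server_hello(record: bytes) -> Optional[Tuple[str, str]]:
    """Return (ja3s_string, md5_hex) for one TLS record holding a ServerHello."""
    try:
        # Record type 0x16 = handshake; handshake type 0x02 = ServerHello.
        if record[0] != 0x16 or record[5] != 0x02:
            return None
        hs_end = 9 + int.from_bytes(record[6:9], "big")
        pos = 9
        (version,) = struct.unpack_from("!H", record, pos)
        pos += 2 + 32                    # legacy_version + random
        pos += 1 + record[pos]           # session_id (1-byte length prefix)
        (cipher,) = struct.unpack_from("!H", record, pos)
        pos += 2 + 1                     # cipher_suite + compression_method
        extensions = []
        if pos + 2 <= hs_end:            # the extensions block is optional
            (ext_total,) = struct.unpack_from("!H", record, pos)
            pos += 2
            end = min(pos + ext_total, hs_end)
            while pos + 4 <= end:
                ext_type, ext_len = struct.unpack_from("!HH", record, pos)
                extensions.append(ext_type)
                pos += 4 + ext_len
    except (IndexError, struct.error):
        return None
    # JA3S string: decimal fields joined by commas, extension types by hyphens.
    ja3s = f"{version},{cipher},{'-'.join(str(e) for e in extensions)}"
    return ja3s, hashlib.md5(ja3s.encode()).hexdigest()


def build_server_hello(version: int, cipher: int, ext_types) -> bytes:
    """Build a minimal synthetic ServerHello record (test helper only)."""
    exts = b"".join(struct.pack("!HH", t, 0) for t in ext_types)
    body = (struct.pack("!H", version) + bytes(32) + b"\x00"
            + struct.pack("!H", cipher) + b"\x00"
            + struct.pack("!H", len(exts)) + exts)
    handshake = b"\x02" + len(body).to_bytes(3, "big") + body
    return b"\x16\x03\x03" + struct.pack("!H", len(handshake)) + handshake


print(ja3s_from_server_hello(build_server_hello(771, 49199, [65281, 0, 11, 35, 16]))[0])
# 771,49199,65281-0-11-35-16
```

A monitoring pipeline could then compare the resulting hashes against ones recorded from known GenAI sessions, keeping the caveats about shared and client-dependent hashes in mind.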

Automating with Snort

Good old Snort! Using the DNS and TLS SNI artifacts described above, we can craft simple Snort rules that trigger automatically and generate alerts and logs related to GenAI usage. The following DNS rule raises an alert when an end user's machine sends a DNS query for chatgpt.com.

alert udp $HOME_NET any -> any 53 (msg:"DNS request to ChatGPT"; content:"|07|chatgpt|03|com|00|"; fast_pattern; nocase; offset:12; depth:32; sid:1000002; rev:1;)
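Maintaining one hand-written rule per domain gets tedious once the watchlist grows. The hypothetical Python helper below generates rules similar to the one above, written for the request direction (from `$HOME_NET` to UDP port 53). It encodes each domain into the DNS wire format that the `content` option matches: every label is prefixed with its length as a hex byte, and the name ends with `|00|`. The starting SID of 1000002 is an assumption, chosen from the range Snort reserves for local rules (1,000,000 and up); adjust it to your own numbering scheme.

```python
import re

# Hostname labels: letters, digits, and hyphens, 1 to 63 characters.
LABEL_RE = re.compile(r"[A-Za-z0-9-]{1,63}")


def dns_label_content(domain: str) -> str:
    """Encode a domain in DNS wire format using Snort's |hex| content syntax."""
    parts = []
    for label in domain.strip(".").split("."):
        if not LABEL_RE.fullmatch(label):
            raise ValueError(f"unexpected DNS label {label!r} in {domain!r}")
        parts.append(f"|{len(label):02x}|{label}")
    return "".join(parts) + "|00|"


def make_dns_rule(domain: str, sid: int, rev: int = 1, depth: int = 32) -> str:
    """Build one rule matching DNS queries for domain (and its subdomains)."""
    # Wire-format length: one length byte per label plus the terminating zero.
    wire_len = len(domain.strip(".")) + 2
    if wire_len > depth:
        raise ValueError(f"{domain!r} needs depth >= {wire_len}")
    return (
        "alert udp $HOME_NET any -> any 53 "
        f'(msg:"DNS request to {domain}"; '
        f'content:"{dns_label_content(domain)}"; fast_pattern; nocase; '
        f"offset:12; depth:{depth}; sid:{sid}; rev:{rev};)"
    )


def make_rules(domains, first_sid: int = 1000002):
    """One rule per domain, with consecutive local SIDs."""
    return [make_dns_rule(d, first_sid + i) for i, d in enumerate(domains)]


for rule in make_rules(["chatgpt.com", "openai.com"]):
    print(rule)
```

The `depth:32` value only searches the first 32 bytes of the question name, so a query for a long subdomain can push the matched labels out of range; raising it (DNS names are at most 255 bytes on the wire) trades a little performance for coverage.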

You have detected GenAI traffic… What now?

GenAI integration will revolutionize the technology space. The key is to securely integrate the capabilities without compromising the confidentiality and privacy of critical infrastructure. Organizations should implement a robust network monitoring capability to detect and analyze network traffic. Staff should receive training on secure and responsible use of AI tools. The training should also outline the potential risks and benefits of these technologies. Besides training, organizations should restrict the use of generative AI to specific network segments.

With regular risk assessments and monitoring, critical infrastructure can remain secure and reliable.
